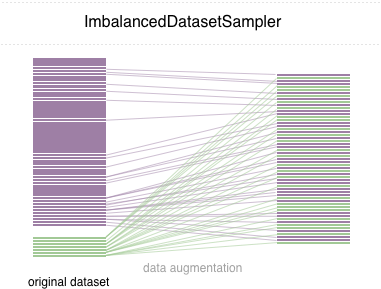

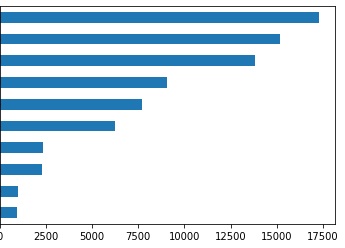

GitHub - ufoym/imbalanced-dataset-sampler: A (PyTorch) imbalanced dataset sampler for oversampling low frequent classes and undersampling high frequent ones.

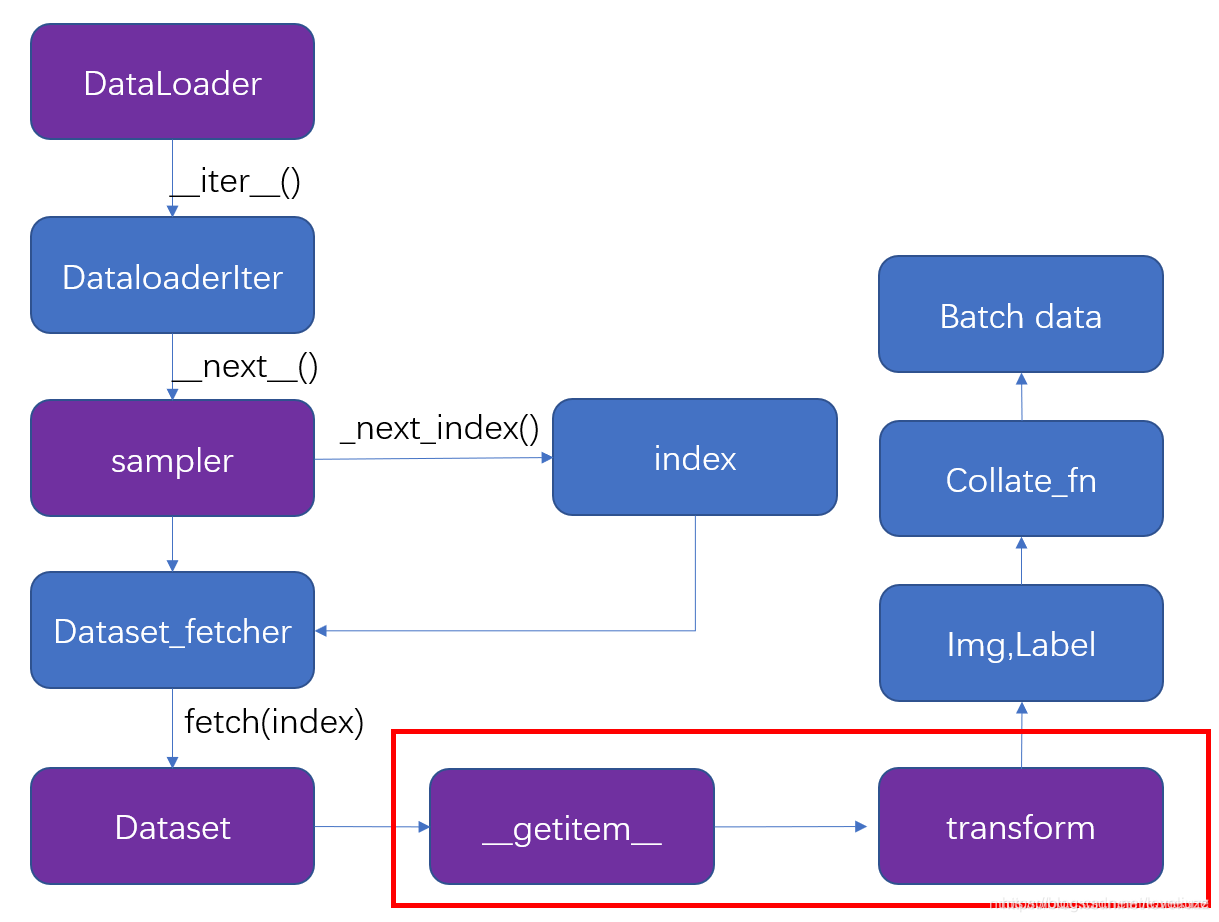

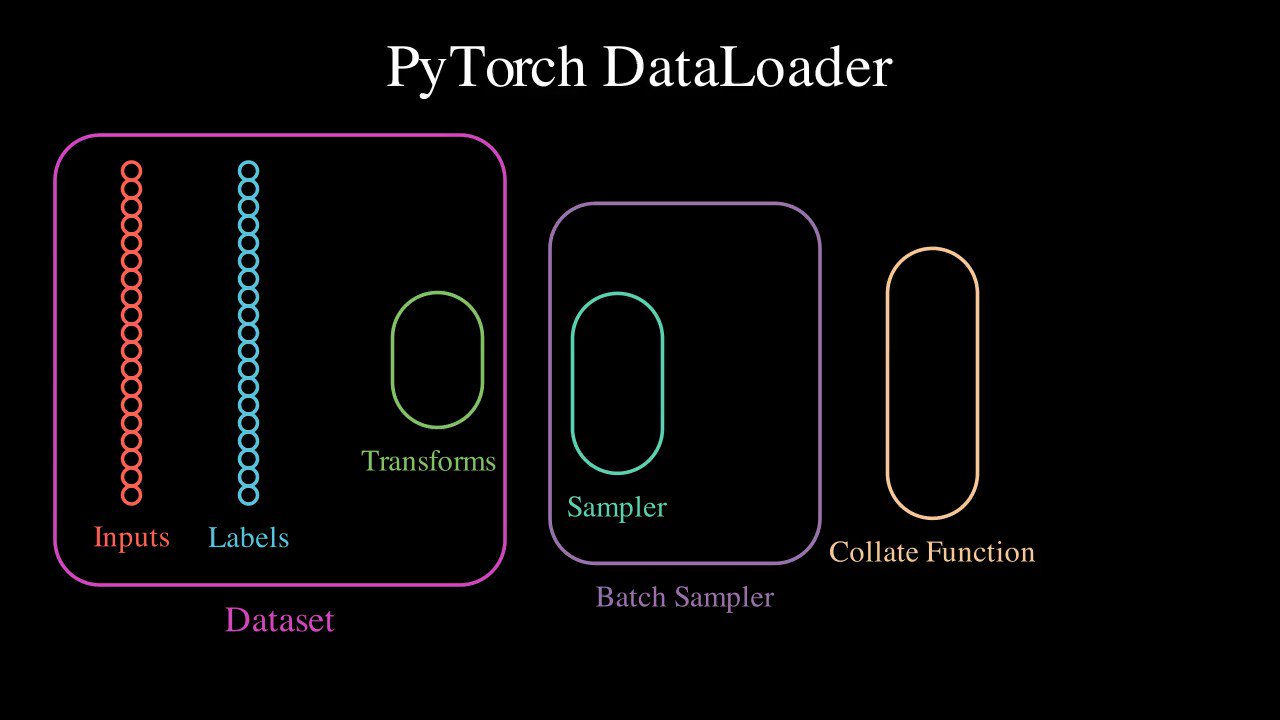

PyTorch Dataset, DataLoader, Sampler and the collate_fn | by Stephen Cow Chau | Geek Culture | Medium

GitHub - khornlund/pytorch-balanced-sampler: PyTorch implementations of `BatchSampler` that under/over sample according to a chosen parameter alpha, in order to create a balanced training distribution.

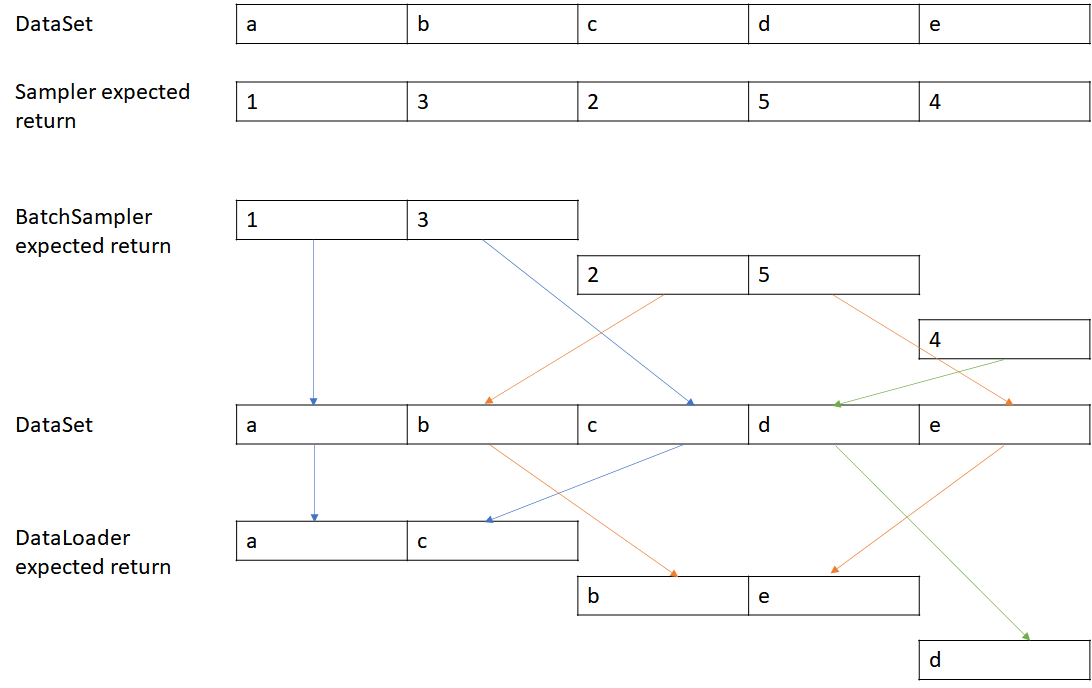

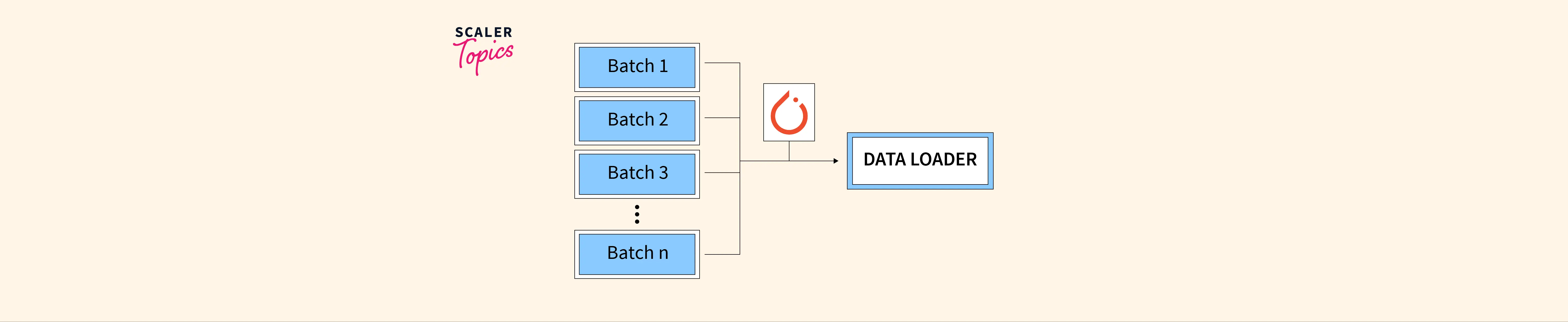

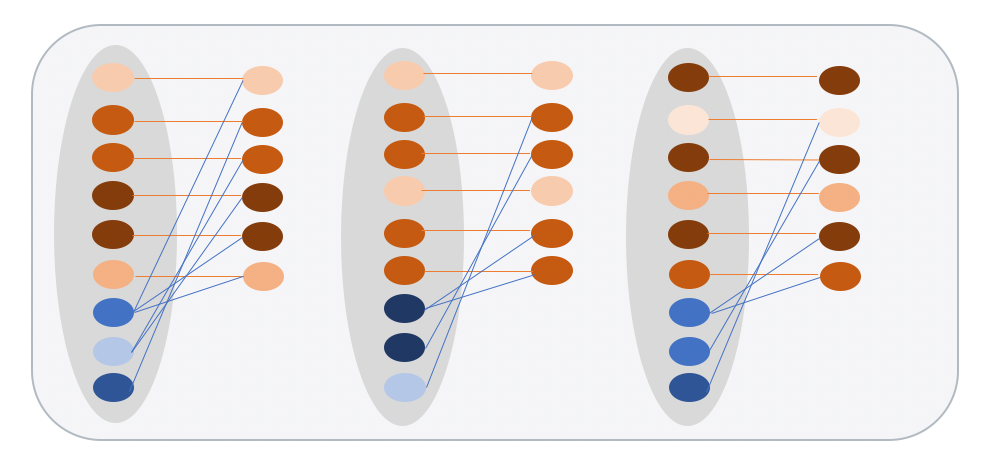

Scott Condron on X: "Here's an animation of a @PyTorch DataLoader. It turns your dataset into a shuffled, batched tensors iterator. (This is my first animation using @manim_community, the community fork of @

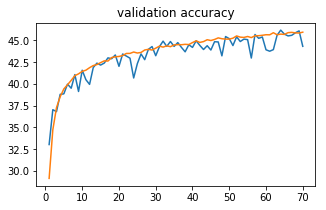

![PyTorch [Basics] — Sampling Samplers | by Akshaj Verma | Towards Data Science](https://miro.medium.com/v2/resize:fit:1358/1*3Dsdw-L4qVhT1WkyLvtsPg.jpeg)

![\[Pytorch\] Sampler, DataLoader, and how data batches are formed - Zhihu](https://picx.zhimg.com/v2-3da901255666b8290485fc7a41a57982_720w.jpg?source=172ae18b)